**Unveiling the Codex API: From Concept to Your First Dynamic AI (Explainers & Common Questions)**

Understanding the Codex API can feel like decoding an ancient scroll, but its core concept is simple: it is a programmatic interface that lets developers add AI capabilities, primarily natural language processing and code generation, to their applications. Think of it as a bridge between your software and a large language model trained on a vast dataset of text and code. Through ordinary API calls, you can generate text, translate between languages, answer complex questions, and write functional code snippets in many programming languages. The appeal lies in its accessibility: you don't need to be an AI expert to use it. You describe what you want the model to do, and the API handles the underlying complexity of running the large language model.

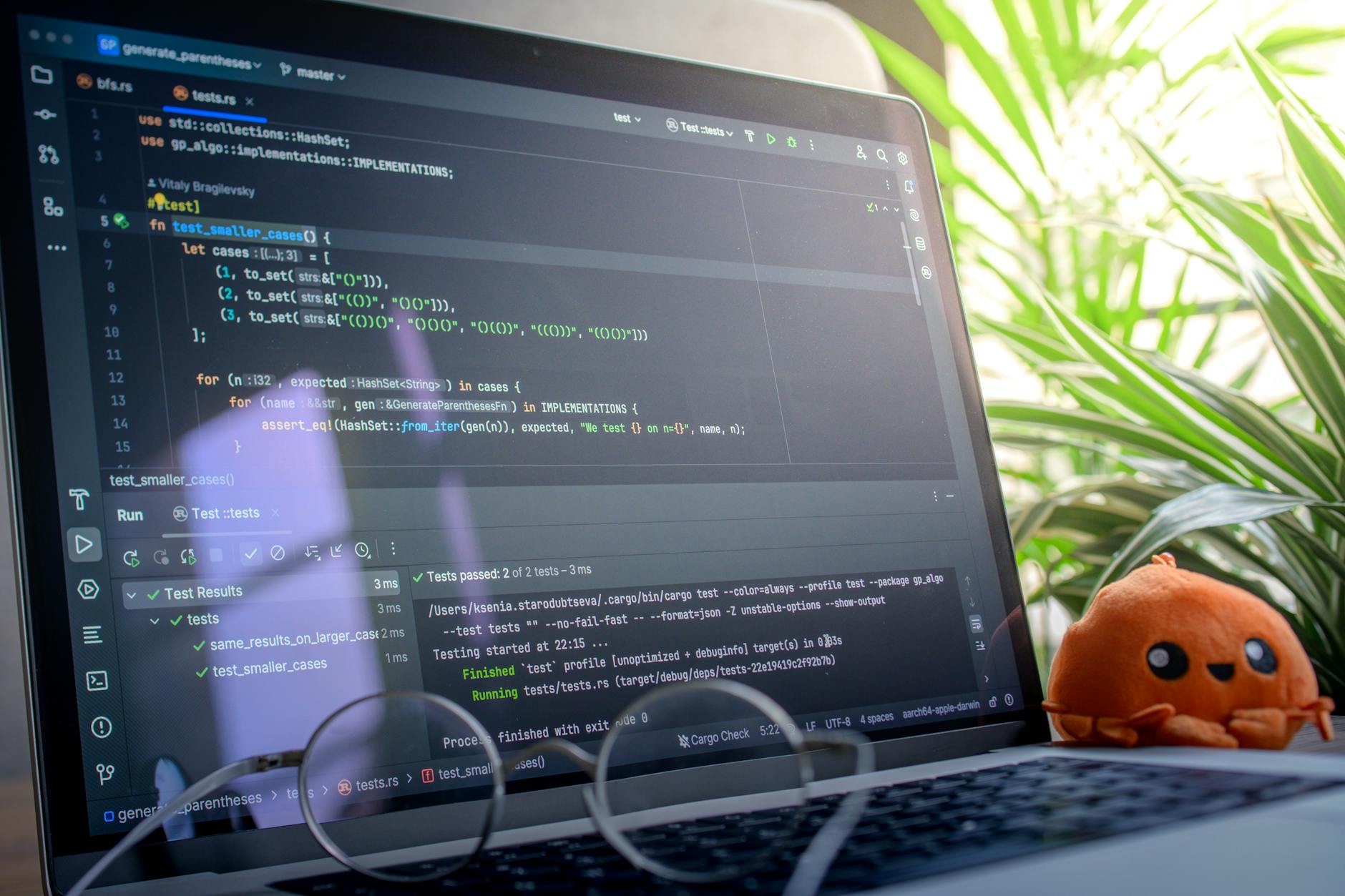

Moving from the abstract concept to your first working integration with the Codex API is straightforward and usually begins with a simple 'Hello World' equivalent. First, you'll need to obtain an API key and understand the basics of making HTTP requests to the API endpoints. Key elements you'll encounter include:

- Prompts: The input you provide to guide the AI's generation.

- Parameters: Settings such as 'temperature' (how random, or "creative", the output is) and 'max_tokens' (the maximum length of the output) that control the AI's response.

- Responses: The AI-generated output, typically in JSON format.
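The request/response cycle described above can be sketched in Python using only the standard library. This is a minimal sketch, not official client code: it assumes an OpenAI-style chat completions endpoint (`API_URL`), a placeholder model identifier (`MODEL_NAME`, hypothetical), and an API key stored in an `OPENAI_API_KEY` environment variable (an assumed name). Parameter names and response shapes vary between providers and model families, so check the documentation for the endpoint you actually call.

```python
import json
import os
import urllib.request

# ASSUMPTION: an OpenAI-style chat completions endpoint; confirm against your provider's docs.
API_URL = "https://api.openai.com/v1/chat/completions"
# HYPOTHETICAL placeholder; substitute the model identifier your account actually exposes.
MODEL_NAME = "your-codex-model"


def build_payload(prompt: str, temperature: float = 0.2, max_tokens: int = 256) -> dict:
    """Bundle the prompt and generation parameters into a JSON-serializable request body."""
    if max_tokens <= 0:
        raise ValueError("max_tokens must be positive")
    return {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],  # the prompt
        "temperature": temperature,  # lower values give more deterministic output
        "max_tokens": max_tokens,    # upper bound on the number of generated tokens
    }


def extract_text(response_body: str) -> str:
    """Pull the generated text out of a chat-completions style JSON response."""
    data = json.loads(response_body)
    return data["choices"][0]["message"]["content"]


def complete(prompt: str, **params) -> str:
    """Send one request and return the generated text (needs network access and an API key)."""
    body = json.dumps(build_payload(prompt, **params)).encode("utf-8")
    request = urllib.request.Request(
        API_URL,
        data=body,
        headers={
            "Content-Type": "application/json",
            # ASSUMPTION: the key lives in the OPENAI_API_KEY environment variable.
            "Authorization": f"Bearer {os.environ['OPENAI_API_KEY']}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as resp:
        return extract_text(resp.read().decode("utf-8"))
```

For example, `complete("Write a Python function that reverses a string.", temperature=0.2, max_tokens=150)` would return the generated text as a string. Keeping payload construction and response parsing in separate functions means both can be tested without making a network call.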

Developers can now use GPT-5.2 Codex through the API to integrate language AI into their applications. The model offers text generation, code completion, and problem-solving capabilities, and because it is exposed through an API, those features can be applied to a wide range of uses in software development.

**Beyond the Basics: Practical Tips & Advanced Techniques with GPT-5.2 Codex (Practical Tips & Common Questions)**

Moving beyond basic content generation, mastering GPT-5.2 Codex requires a deeper understanding of its nuances and capabilities. Start by refining your prompt engineering. Instead of generic requests, provide more context: specify the desired tone, target audience, and even preferred sentence structures. For instance, rather than just “write about SEO,” try “write an engaging, beginner-friendly blog post for small business owners on the importance of long-tail keywords, using a conversational and encouraging tone.” Experiment with few-shot prompting: provide a couple of examples of the desired output before your main request. This technique can significantly improve the quality and specificity of the output, especially for complex or highly specialized topics. Finally, use iterative prompting: generate a draft, identify areas for improvement, and then send targeted follow-up prompts to refine the content. This collaborative approach gets far more out of GPT-5.2 Codex than a single one-off prompt.
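Few-shot and iterative prompting both come down to how you assemble the conversation sent to the model. The sketch below assumes a chat-style message list with `system`, `user`, and `assistant` roles (an OpenAI-style convention this article does not itself specify); the helper names `few_shot_messages` and `add_refinement` are hypothetical, and any example texts you pass in are placeholders for your own.

```python
from typing import Dict, List, Tuple

Message = Dict[str, str]


def few_shot_messages(instruction: str, examples: List[Tuple[str, str]], request: str) -> List[Message]:
    """Build a transcript: the instruction, worked examples, then the real request."""
    # The system message carries the context: tone, audience, structure.
    messages: List[Message] = [{"role": "system", "content": instruction}]
    for example_input, example_output in examples:
        # Each example is shown as a user turn followed by the ideal assistant reply.
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    # The actual request comes last, so the model answers it in the demonstrated style.
    messages.append({"role": "user", "content": request})
    return messages


def add_refinement(messages: List[Message], draft: str, feedback: str) -> List[Message]:
    """Iterative prompting: append the model's draft plus a targeted follow-up request.

    Returns a new list so the original transcript is left unchanged.
    """
    return messages + [
        {"role": "assistant", "content": draft},
        {"role": "user", "content": feedback},
    ]
```

For the SEO example above, the instruction might describe the beginner-friendly, encouraging tone, the examples might be two short intros you already like, and the request might be "Topic: the importance of long-tail keywords". After reviewing the first draft, `add_refinement(messages, draft, "Shorten the introduction and add one concrete example.")` produces the transcript for the next round.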

Beyond crafting better prompts, optimizing your workflow with GPT-5.2 Codex involves a few advanced techniques and some common practical questions. A frequent concern is factual accuracy. Powerful as it is, the model can hallucinate or return outdated information, so always cross-reference critical data points with reliable sources. For long-form content, break the task into smaller, manageable chunks: generate an outline first, then tackle each section individually, checking for logical flow and coherence. Another common question is how to avoid repetitive phrasing. To combat this, vary your prompt structures and explicitly request diverse vocabulary or sentence structures. For instance, you might include a directive like “ensure varied sentence beginnings” or “avoid repeated adjectives.” Reviewing and editing the AI-generated output for originality, tone, and accuracy remains crucial, even with highly advanced models like GPT-5.2 Codex.
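Two of these tips lend themselves to small helpers: drafting long-form content section by section from an outline, and flagging repeated sentence openings before you edit. This is a minimal sketch under stated assumptions: `generate` stands in for whatever function sends a prompt to GPT-5.2 Codex and returns text (for example, a wrapper around your own API call), and both helper names are hypothetical. The repetition check is a crude heuristic that supplements, rather than replaces, a human review.

```python
import re
from collections import Counter
from typing import Callable, Dict, List


def generate_in_sections(topic: str, outline: List[str], generate: Callable[[str], str]) -> Dict[str, str]:
    """Draft long-form content one outline heading at a time.

    `generate` is any prompt -> text function (HYPOTHETICAL: e.g. your own API wrapper).
    """
    sections: Dict[str, str] = {}
    for i, heading in enumerate(outline):
        previous = outline[i - 1] if i > 0 else "none (this is the opening section)"
        # Each prompt repeats the full outline and names the previous section,
        # so separately generated chunks still follow one logical thread.
        prompt = (
            f"You are writing an article about {topic}.\n"
            f"Full outline: {'; '.join(outline)}.\n"
            f"Previous section: {previous}.\n"
            f"Write only the section titled '{heading}', continuing logically from the previous one."
        )
        sections[heading] = generate(prompt)
    return sections


def repeated_openings(text: str, min_count: int = 2) -> Dict[str, int]:
    """Return sentence-opening words that occur at least `min_count` times."""
    # Split after ., ! or ? followed by whitespace; a rough sentence splitter.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    # Normalize the first word of each sentence: lowercase, trailing punctuation removed.
    openers = Counter(s.split()[0].lower().strip(",;:.!?\"'") for s in sentences)
    return {word: n for word, n in openers.items() if n >= min_count}
```

For example, `repeated_openings("This model is fast. This model is cheap. It works well. This is great!")` returns `{"this": 3}`, which suggests asking for a revision with “ensure varied sentence beginnings.”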